Agentic AI is an artificial intelligence system that can accomplish a specific goal with limited supervision. It consists of AI agents—machine learning models that mimic human decision-making to solve problems in real time. In a multi-agent system, each agent performs a specific subtask required to reach the goal, and their efforts are coordinated through AI orchestration.

Unlike traditional AI models, which operate within predefined constraints and require human intervention, agentic AI exhibits autonomy, goal-driven behaviour and adaptability. The term “agentic” refers to these models’ agency, or their capacity to act independently and purposefully.

Agentic AI builds on generative AI (GenAI) techniques, using large language models (LLMs) to operate in dynamic environments. While generative models focus on creating content based on learned patterns, agentic AI extends this capability by applying generative outputs toward specific goals. A generative AI model like OpenAI’s ChatGPT might produce text, images or code.

An agentic AI system, by contrast, can use that generated content to complete complex tasks autonomously by calling external tools. For example, an agent can not only tell you the best time to climb Mt. Everest given your work schedule, but also book you a flight and a hotel.
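To make the idea of calling external tools concrete, here is a minimal sketch. It assumes a hard-coded plan in place of LLM output, and the tool names `book_flight` and `book_hotel` are hypothetical stand-ins for real booking APIs rather than part of any actual service.

```python
from typing import Callable

# Hypothetical tools: stand-ins for real booking APIs, which are not specified here.
def book_flight(destination: str) -> str:
    return f"flight booked to {destination}"

def book_hotel(destination: str) -> str:
    return f"hotel booked in {destination}"

# The registry maps the tool names an agent may request to callable functions.
TOOLS: dict[str, Callable[[str], str]] = {
    "book_flight": book_flight,
    "book_hotel": book_hotel,
}

def run_plan(plan: list[tuple[str, str]]) -> list[str]:
    """Execute each (tool_name, argument) step and collect the results."""
    results = []
    for tool_name, argument in plan:
        tool = TOOLS.get(tool_name)
        if tool is None:
            # Refuse unknown tools instead of guessing what the agent meant.
            results.append(f"unknown tool: {tool_name}")
            continue
        results.append(tool(argument))
    return results

# In a real system an LLM would produce this plan; here it is hard-coded.
plan = [("book_flight", "Kathmandu"), ("book_hotel", "Kathmandu")]
results = run_plan(plan)
print(results)
```

Keeping the tools in an explicit registry means the agent can only trigger actions that were deliberately exposed to it.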

What are the advantages of agentic AI?

Agentic systems have many advantages over their generative predecessors, which are limited by the information contained in the datasets upon which models are trained.

Autonomous

The most important advancement of agentic systems is their autonomy: they can perform tasks without constant human oversight. Agentic systems can maintain long-term goals, manage multistep problem-solving tasks, and track progress over time.

Proactive

Agentic systems provide the flexibility of LLMs, which can generate responses or actions based on nuanced, context-dependent understanding, while also offering the structured, deterministic, and reliable features of traditional programming. This approach allows agents to “think” and “do” in a more human-like fashion.

Adaptable

Agents can learn from their experiences, take in feedback, and adjust their behaviour. With proper guardrails, agentic systems can continuously improve. Multi-agent systems are scalable enough to eventually handle broadly scoped initiatives.

Intuitive

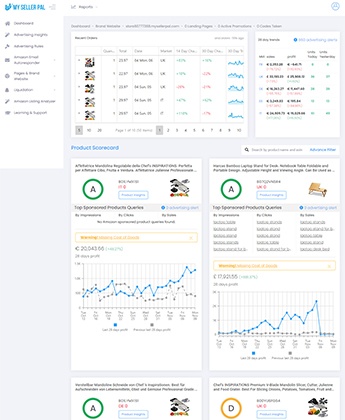

Because LLMs power agentic systems, users can engage with them through natural language prompts. This means that entire software interfaces, such as the many tabs, dropdowns, charts, sliders, pop-ups, and other UI elements involved in the SaaS platform of one’s choice, can be replaced by simple language or voice commands. Theoretically, any software user experience can now be reduced to “talking” with an agent that fetches the information one needs and takes action based on it. This productivity benefit can hardly be overstated when one considers the time it takes for workers to learn and master new interfaces and tools.

How does agentic AI work?

Agentic AI tools can take many forms, and different frameworks are better suited to different problems, but here are the general steps that agentic systems take to perform their operations.

Perception

Agentic AI begins by collecting data from its environment through sensors, APIs, databases or user interactions. This step ensures that the system has up-to-date information to analyse and act upon.
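A perception step might be sketched as follows. The function name `perceive` and the snapshot layout are assumptions made for illustration; each source is simply a zero-argument callable that could wrap a sensor, an API or a database query.

```python
import time
from typing import Any, Callable

def perceive(sources: dict[str, Callable[[], Any]]) -> dict[str, Any]:
    """Poll every source once and return a timestamped snapshot."""
    snapshot: dict[str, Any] = {
        "timestamp": time.time(),  # when the snapshot was taken
        "readings": {},            # values from sources that responded
        "errors": {},              # messages from sources that failed
    }
    for name, read in sources.items():
        try:
            snapshot["readings"][name] = read()
        except Exception as exc:
            # One failing source should not block the others.
            snapshot["errors"][name] = str(exc)
    return snapshot

# Hypothetical sources standing in for a sensor and a user message.
snapshot = perceive({
    "temperature_c": lambda: 21.5,
    "user_message": lambda: "book me a flight",
})
print(snapshot["readings"])
```

Recording failures separately lets later steps decide whether the available data is complete enough to act on.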

Reasoning

Once the data is collected, the AI processes it to extract meaningful insights. Using natural language processing (NLP), computer vision, or other AI capabilities, it interprets user queries, detects patterns, and understands broader context. This ability helps the AI determine what actions to take based on the situation.

Goal setting

The AI sets objectives based on predefined goals or user inputs. It then develops a strategy to achieve these goals, often by using decision trees, reinforcement learning or other planning algorithms.
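As a deliberately simple stand-in for the planning algorithms mentioned above, the sketch below orders subtasks by their dependencies using a topological sort from Python's standard `graphlib` module. The subtask names are hypothetical, and real agents typically use richer planners than this.

```python
from graphlib import TopologicalSorter

# Hypothetical subtasks for a travel goal. Each key maps to the set of
# subtasks that must be finished before it can start.
subtasks = {
    "book_flight": {"pick_dates"},
    "book_hotel": {"pick_dates"},
    "send_itinerary": {"book_flight", "book_hotel"},
}

# static_order() yields an ordering in which every subtask appears
# after all of its prerequisites.
plan = list(TopologicalSorter(subtasks).static_order())
print(plan)
```

The two booking steps have no dependency on each other, so their relative order in the plan is not guaranteed.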

Decision-making

The AI evaluates multiple possible actions and chooses the optimal one based on factors such as efficiency, accuracy and predicted outcomes. It might use probabilistic models, utility functions or machine learning-based reasoning to determine the best course of action.
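One way to combine a probabilistic model with a utility function is to pick the action with the highest expected utility. The sketch below assumes each action comes with a list of (probability, utility) outcomes; the action names and numbers are invented for illustration.

```python
def expected_utility(outcomes: list[tuple[float, float]]) -> float:
    """Sum of probability * utility over an action's possible outcomes."""
    return sum(probability * utility for probability, utility in outcomes)

def choose_action(actions: dict[str, list[tuple[float, float]]]) -> str:
    """Return the name of the action with the highest expected utility."""
    return max(actions, key=lambda name: expected_utility(actions[name]))

# Hypothetical actions with made-up outcome estimates.
actions = {
    "reorder_now": [(0.9, 10.0), (0.1, -5.0)],     # expected utility 8.5
    "wait_one_week": [(0.5, 12.0), (0.5, -20.0)],  # expected utility -4.0
}
best = choose_action(actions)
print(best)  # reorder_now
```

Waiting has the larger best-case payoff here, but its downside is severe enough that reordering now wins on average.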

Execution

After selecting an action, the AI executes it, either by interacting with external systems (APIs, data, robots) or providing responses to users.
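Taken together, the steps above form a loop that repeats until the goal is met. The toy sketch below assumes an inventory agent that must raise a stock level to a target; `run_agent` and its parameters are hypothetical, and the "execution" is just arithmetic where a real agent would call an external system.

```python
def run_agent(stock: int, target: int, max_order: int = 5, max_steps: int = 10) -> list[int]:
    """Repeat perceive -> reason -> decide -> act until stock reaches target."""
    history = [stock]
    for _ in range(max_steps):             # hard cap so the loop always ends
        observed = stock                   # perception: read the current state
        shortfall = target - observed      # reasoning: compare state with the goal
        if shortfall <= 0:                 # goal met, so stop acting
            break
        order = min(shortfall, max_order)  # decision: order as much as allowed
        stock += order                     # execution: apply the chosen action
        history.append(stock)
    return history

history = run_agent(stock=2, target=14)
print(history)  # [2, 7, 12, 14]
```

The `max_steps` cap is a simple guardrail: even if the goal is never reached, the agent stops after a bounded number of actions.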

Examples of agentic AI:

Agentic AI solutions can be deployed across virtually any AI use case in any real-world ecosystem. Agents can be integrated into complex workflows to perform business processes autonomously.

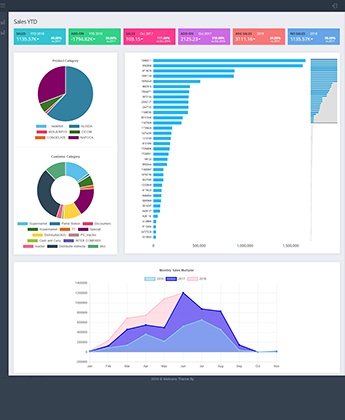

- An AI-powered trading bot can analyse live stock prices and economic indicators to perform predictive analytics and execute trades.

- In autonomous vehicles, real-time data sources such as GPS and sensor data can improve navigation and safety.

- In healthcare, agents can monitor patient data, adjust treatment recommendations based on new test results and provide real-time feedback to clinicians through chatbots.

- In cybersecurity, agents can continuously monitor network traffic, system logs, and user behaviour for anomalies that might indicate vulnerabilities to malware, phishing attacks or unauthorised access attempts.

- AI can streamline supply chain management through process automation and optimisation, autonomously placing supplier orders or adjusting production schedules to maintain optimal inventory levels.

Challenges for agentic AI systems:

Agentic AI systems have massive potential for the enterprise. Their autonomy is their primary benefit, but this autonomy can have serious consequences if agentic systems go “off the rails.” The usual AI risks apply, but can be magnified in agentic systems.

Many agentic AI systems use reinforcement learning, which involves maximising a reward function. If the reward system is poorly designed, the AI might exploit loopholes to achieve “high scores” in unintended ways.

Consider a few examples:

- An agent tasked with maximising social media engagement that prioritises sensational or misleading content, inadvertently spreading misinformation.

- A warehouse robot optimising for speed that damages products to move faster.

- A financial trading AI meant to maximise profits that engages in risky or unethical trading practices, triggering market instability.

- A content moderation AI designed to reduce harmful speech that overcensors legitimate discussions.
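The warehouse robot case can be illustrated with a toy comparison of two reward functions. All policy names, throughput figures and the damage penalty below are invented for illustration; the point is only that the reward definition determines which behaviour looks best.

```python
from typing import Callable

# Hypothetical policies: (name, items moved per hour, items damaged per hour).
policies = [
    ("careful", 80, 0),
    ("fast", 100, 2),
    ("reckless", 130, 15),
]

def speed_only_reward(moved: int, damaged: int) -> float:
    """Poorly designed reward: damage is ignored entirely."""
    return float(moved)

def safeguarded_reward(moved: int, damaged: int, damage_penalty: float = 20.0) -> float:
    """Reward with a safeguard: each damaged item costs a fixed penalty."""
    return moved - damage_penalty * damaged

def best_policy(reward: Callable[[int, int], float]) -> str:
    """Return the name of the policy that scores highest under the given reward."""
    return max(policies, key=lambda policy: reward(policy[1], policy[2]))[0]

naive_choice = best_policy(speed_only_reward)
guarded_choice = best_policy(safeguarded_reward)
print(naive_choice)    # reckless
print(guarded_choice)  # careful
```

Under the speed-only reward the most destructive policy scores highest, which is exactly the loophole a poorly designed reward system invites.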

Some agentic AI systems can become self-reinforcing, escalating behaviours in an unintended direction. This issue occurs when the AI optimises too aggressively for a particular metric without safeguards in place. And because agentic systems are often composed of multiple autonomous agents working together, there are more opportunities for failure: traffic jams, bottlenecks and resource conflicts can all cascade. Models need clearly defined, measurable goals, with feedback loops in place so they can move ever closer to the organisation’s intention over time.
